
Histogram Binning, Isotonic Regression, and BBQ tutorial

This notebook-style script demonstrates how to use the post-processing scalers provided by torch_uncertainty to calibrate a pretrained ResNet-18 on CIFAR-100.

1. Loading the Utilities

We import:

- CIFAR100DataModule for data handling
- CalibrationError to compute ECE and plot reliability diagrams
- the resnet builder and load_hf to fetch pretrained weights
- BBQScaler, HistogramBinningScaler, and IsotonicRegressionScaler to calibrate predictions

```python
import torch
from torch.utils.data import DataLoader, random_split

from torch_uncertainty.datamodules import CIFAR100DataModule
from torch_uncertainty.metrics import CalibrationError
from torch_uncertainty.models.classification import resnet
from torch_uncertainty.post_processing import (
    BBQScaler,
    HistogramBinningScaler,
    IsotonicRegressionScaler,
)
from torch_uncertainty.utils import load_hf
```

2. Loading a pretrained model from the hub

Build a ResNet-18 (CIFAR style) and download pretrained weights from the hub. The returned config isn’t required for this demo but is shown for completeness.

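```python
# CIFAR-style ResNet-18 with 100 output classes
model = resnet(in_channels=3, num_classes=100, arch=18, style="cifar", conv_bias=False)

# Download the pretrained weights (the config is not used below)
weights, config = load_hf("resnet18_c100")
model.load_state_dict(weights)
model = model.eval()
```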

3. Setting up the Datamodule and Dataloaders

Prepare the CIFAR-100 test set and create DataLoaders. We split the test set into a calibration subset and a held-out test subset, so that ECE is always measured on data the scalers were not fitted on.

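```python
dm = CIFAR100DataModule(root="./data", eval_ood=False, batch_size=32)
dm.prepare_data()
dm.setup("test")

# Use 5,000 test images for calibration and keep the rest for evaluation.
# The split is random, so the ECE values below can vary slightly between runs.
dataset = dm.test
cal_dataset, test_dataset = random_split(dataset, [5000, len(dataset) - 5000])

test_dataloader = DataLoader(test_dataset, batch_size=128)
calibration_dataloader = DataLoader(cal_dataset, batch_size=128)
```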


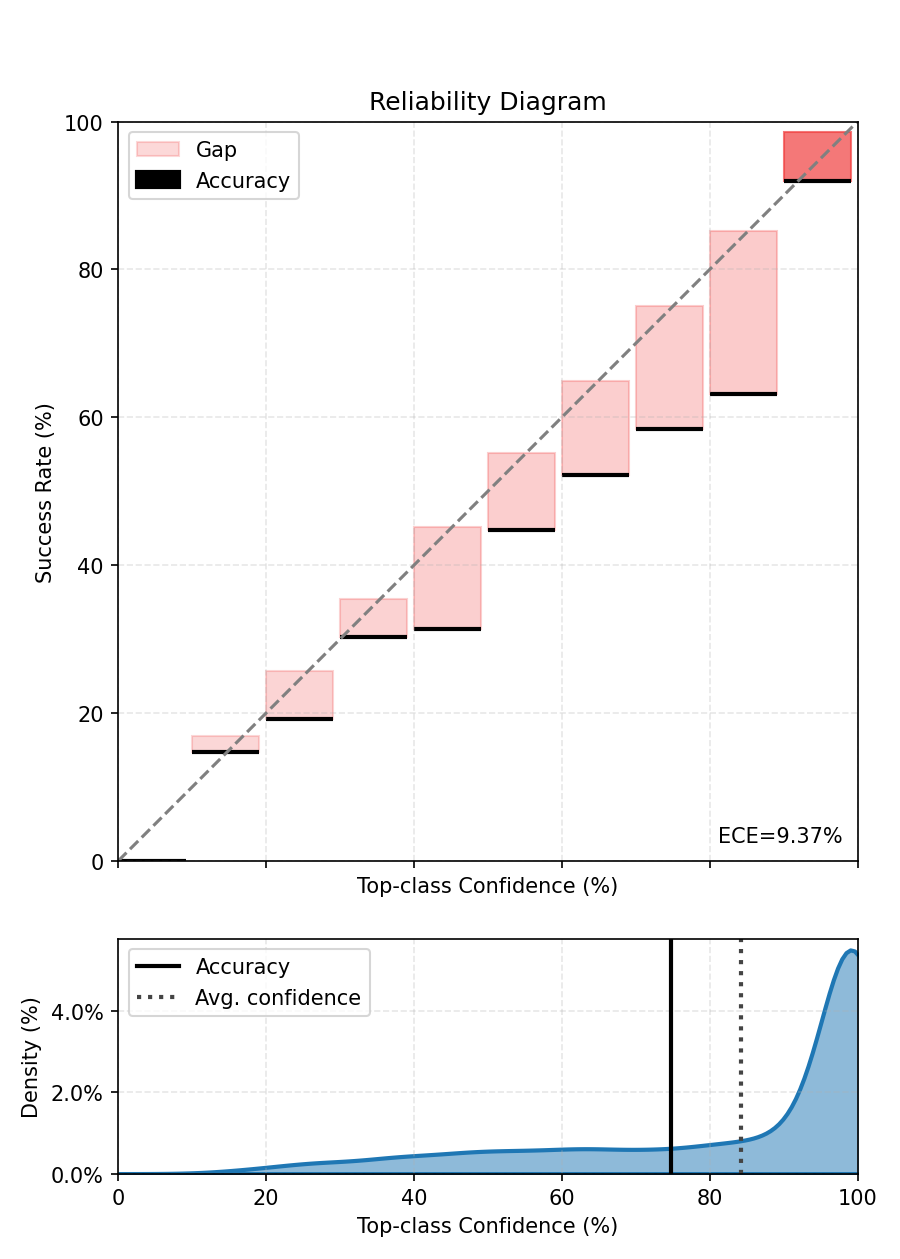

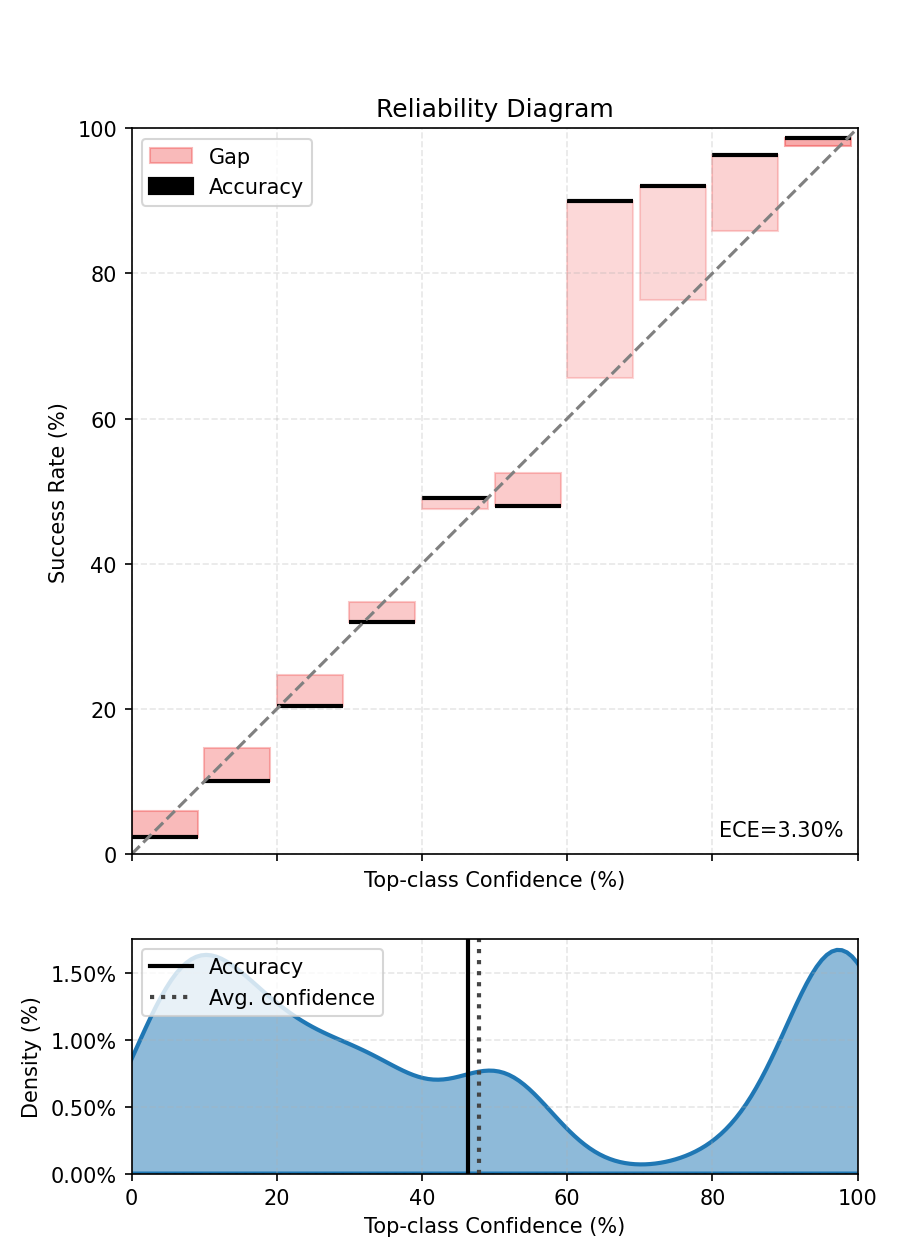

4. Baseline ECE (before any calibration)

Compute the top-label ECE of the uncalibrated model to establish a baseline. The metric receives probabilities (a softmax over the logits).

```python
ece = CalibrationError(task="multiclass", num_classes=100)

with torch.no_grad():
    for sample, target in test_dataloader:
        logits = model(sample)
        probs = logits.softmax(-1)
        ece.update(probs, target)

print(f"ECE before calibration - {ece.compute():.3%}.")

fig, ax = ece.plot()
fig.tight_layout()
fig.show()
```

ECE before calibration - 9.672%.

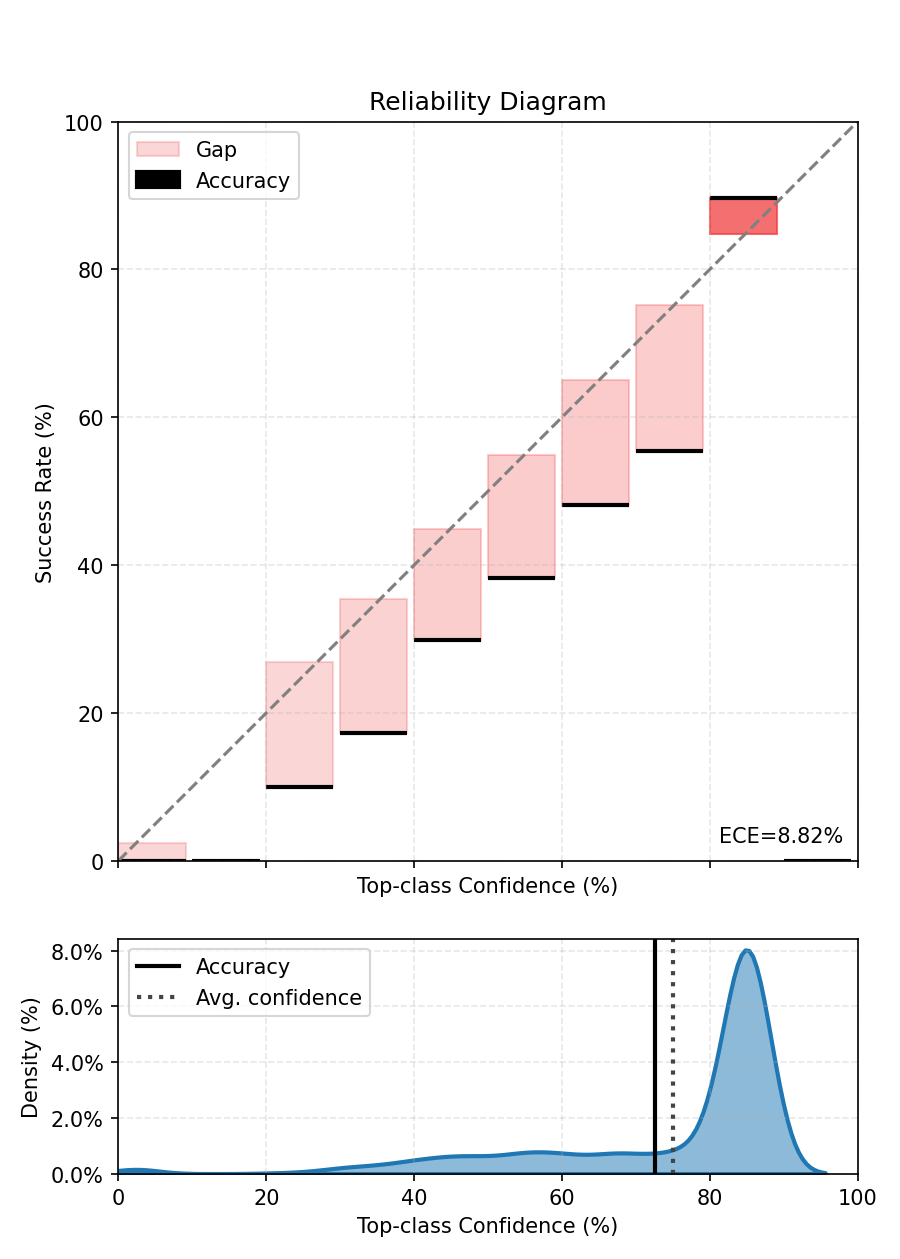
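The top-label ECE reported above can be sketched in a few lines of NumPy to show what the metric measures. This is a minimal sketch, not CalibrationError's implementation. It assumes equal-width confidence bins (15 here by default, which may differ from the metric's own setting). Samples are grouped by their maximum probability, and the gaps |accuracy - confidence| are averaged, each weighted by the fraction of samples in its bin.

```python
import numpy as np


def top_label_ece(probs: np.ndarray, labels: np.ndarray, num_bins: int = 15) -> float:
    """Top-label expected calibration error with equal-width bins.

    probs: (N, C) predicted probabilities; labels: (N,) integer targets.
    """
    confidences = probs.max(axis=1)  # top-label confidence per sample
    predictions = probs.argmax(axis=1)  # predicted class per sample
    correct = (predictions == labels).astype(float)

    edges = np.linspace(0.0, 1.0, num_bins + 1)
    # Bin b covers (edges[b], edges[b + 1]]; clipping keeps a confidence of 0 in bin 0.
    bin_ids = np.clip(np.searchsorted(edges, confidences, side="left") - 1, 0, num_bins - 1)

    ece = 0.0
    for b in range(num_bins):
        in_bin = bin_ids == b
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.mean() * gap  # weight by the fraction of samples in the bin
    return float(ece)


probs = np.array([[0.95, 0.05], [0.55, 0.45], [0.25, 0.75]])
labels = np.array([0, 1, 1])
# Each sample lands in its own bin, so ECE = (0.05 + 0.55 + 0.25) / 3
print(top_label_ece(probs, labels, num_bins=10))
```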

5. Bayesian Binning into Quantiles (BBQ): fit and evaluate

```python
bbq_scaler = BBQScaler(model=model, device=None)
bbq_scaler.fit(dataloader=calibration_dataloader)

# Evaluate the BBQ-calibrated model on the held-out test set
ece.reset()
with torch.no_grad():
    for sample, target in test_dataloader:
        # For multiclass, this scaler is expected to return log-probabilities; apply softmax.
        calibrated_out = bbq_scaler(sample)
        probs = calibrated_out.softmax(-1)
        ece.update(probs, target)

print(f"ECE after BBQ Binning - {ece.compute():.3%}.")

fig, ax = ece.plot()
fig.tight_layout()
fig.show()
```


ECE after BBQ Binning - 3.278%.

If you look closely at the predictions of the BBQScaler here, you will see that they are based on equal-frequency bins. Because there are 100 classes, most per-class scores are small, so most bins cover low-confidence values.
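A short NumPy sketch makes this concrete. It assumes the bins are built over pooled one-vs-rest class scores, which is an assumption about BBQScaler's internals. Each softmax row sums to 1, so at most 9 of its 100 scores can exceed 0.1. At least 91% of the pooled scores are therefore at most 0.1, and 9 of 10 equal-frequency bins must lie entirely inside [0, 0.1]. The synthetic logits are illustrative only, not CIFAR-100 predictions.

```python
import numpy as np


def equal_frequency_edges(scores: np.ndarray, num_bins: int) -> np.ndarray:
    """Bin edges such that each bin holds roughly the same number of scores."""
    edges = np.quantile(scores, np.linspace(0.0, 1.0, num_bins + 1))
    edges[0], edges[-1] = 0.0, 1.0  # stretch the outer edges to cover [0, 1]
    return edges


rng = np.random.default_rng(0)
num_samples, num_classes = 5000, 100
# Synthetic softmax outputs (illustrative only)
logits = 3.0 * rng.standard_normal((num_samples, num_classes))
probs = np.exp(logits - logits.max(axis=1, keepdims=True))
probs /= probs.sum(axis=1, keepdims=True)

# One-vs-rest view: pool every (sample, class) score together
edges = equal_frequency_edges(probs.ravel(), num_bins=10)
low_confidence_bins = int((edges[1:] <= 0.1).sum())  # bins lying entirely in [0, 0.1]
print(np.round(edges, 4))
print(f"{low_confidence_bins} of 10 bins lie in [0, 0.1]")
```

Only the last bin stretches up to 1, so nearly all of BBQ's resolution goes to scores that are irrelevant for the top-label prediction.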

6. Histogram Binning: fit and evaluate

Fit Histogram Binning on the calibration dataloader. Typical choices for num_bins lie between 10 and 20. Fewer bins give a coarser but more stable mapping. More bins are more flexible but leave fewer samples in each bin. If you run on GPU, you can pass device=torch.device("cuda"), or pass device=None to let the scaler infer the device from the calibration data.

```python
hist_scaler = HistogramBinningScaler(model=model, num_bins=10, device=None)
hist_scaler.fit(dataloader=calibration_dataloader)

# Evaluate the histogram-binned model on the held-out test set
ece.reset()
with torch.no_grad():
    for sample, target in test_dataloader:
        # For multiclass, this scaler is expected to return log-probabilities; apply softmax.
        calibrated_out = hist_scaler(sample)
        probs = calibrated_out.softmax(-1)
        ece.update(probs, target)

print(f"ECE after Histogram Binning - {ece.compute():.3%}.")

fig, ax = ece.plot()
fig.tight_layout()
fig.show()
```


ECE after Histogram Binning - 10.536%.

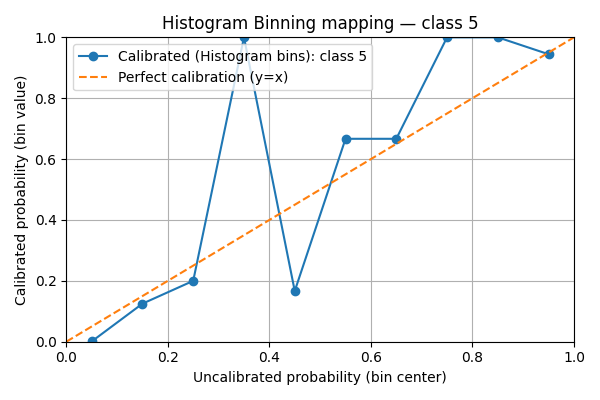

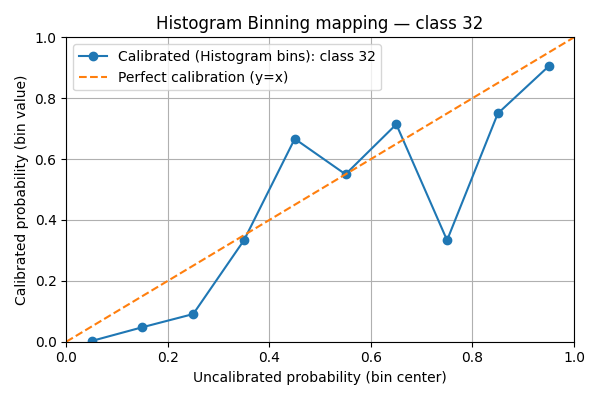

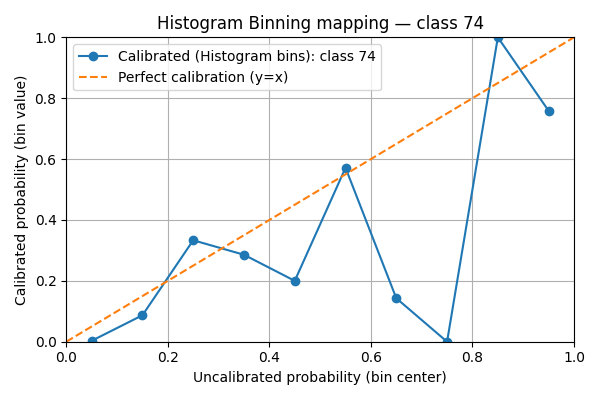
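The idea behind per-class histogram binning (Zadrozny & Elkan, 2001) can be sketched with NumPy. This is a minimal one-vs-rest sketch with equal-width bins, not the HistogramBinningScaler implementation. For each class, it replaces a score by the empirical frequency of that class among calibration samples whose score falls in the same bin. Two details are assumptions of this sketch: empty bins fall back to their bin center, and each row is renormalized to sum to 1.

```python
import numpy as np


def fit_histogram_binning(probs: np.ndarray, labels: np.ndarray, num_bins: int = 10):
    """One-vs-rest histogram binning with equal-width bins.

    Returns (edges, bin_values), where bin_values[c, b] is the empirical frequency of
    class c among calibration samples whose class-c score falls in bin b.
    """
    num_classes = probs.shape[1]
    edges = np.linspace(0.0, 1.0, num_bins + 1)
    centers = (edges[:-1] + edges[1:]) / 2.0
    # Empty bins keep their center, i.e. the identity mapping (an assumption)
    bin_values = np.tile(centers, (num_classes, 1))
    one_hot = np.eye(num_classes)[labels]  # (N, C) binary targets
    bin_ids = np.digitize(probs, edges[1:-1])  # (N, C), values in 0..num_bins-1
    for c in range(num_classes):
        for b in range(num_bins):
            in_bin = bin_ids[:, c] == b
            if in_bin.any():
                bin_values[c, b] = one_hot[in_bin, c].mean()
    return edges, bin_values


def apply_histogram_binning(probs: np.ndarray, edges: np.ndarray, bin_values: np.ndarray):
    """Replace each class score by its bin value, then renormalize each row."""
    num_classes = probs.shape[1]
    bin_ids = np.digitize(probs, edges[1:-1])
    calibrated = bin_values[np.arange(num_classes)[None, :], bin_ids]  # (N, C)
    row_sums = calibrated.sum(axis=1, keepdims=True)
    # Rows whose bin values are all zero fall back to a uniform distribution
    return np.where(row_sums > 0, calibrated / np.maximum(row_sums, 1e-12), 1.0 / num_classes)


probs = np.array([[0.95, 0.05]] * 4)
labels = np.array([0, 0, 0, 1])  # the model is overconfident on class 0
edges, bin_values = fit_histogram_binning(probs, labels)
print(apply_histogram_binning(probs, edges, bin_values))  # each row becomes [0.75, 0.25]
```

The double loop makes the data requirement visible. Each of the C × B cells is estimated independently, so its estimate is only as good as the number of calibration samples that land in it.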

7. Visualize per-class histogram-binning mappings

We plot the learned histogram-binning mapping for a handful of classes. For each selected class we show:

- the bin centers (x-axis) vs. the calibrated bin values (y-axis), as a line with markers;
- a reference diagonal y = x (perfect calibration);
- marker sizes scaled by the number of calibration examples that fell into each bin.

This visualization helps detect bins with very few samples (small markers) and shows whether the method systematically under- or over-estimates confidence.

Notes:

- The code uses only matplotlib for plotting and creates one figure per class.
- If your scaler stores bin_edges and bin_values under different names, adjust accordingly.
- We pick three classes (5, 32, and 74).

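```python
import matplotlib.pyplot as plt
import numpy as np

bin_edges = hist_scaler.bin_edges.cpu().numpy()  # (B+1,)
bin_centers = (bin_edges[:-1] + bin_edges[1:]) / 2.0  # (B,)
bin_values_all = hist_scaler.bin_values.cpu().numpy()  # (C, B)

# Uncalibrated probabilities on the calibration set, used only to count how many
# examples fall into each bin (np.histogram may treat bin edges slightly
# differently from the scaler itself).
with torch.no_grad():
    cal_probs = torch.cat(
        [model(sample).softmax(-1) for sample, _ in calibration_dataloader]
    ).cpu().numpy()  # (N, C)

classes_to_plot = [5, 32, 74]

# Plot one figure per selected class
for c in classes_to_plot:
    vals = bin_values_all[c]  # (B,)
    counts, _ = np.histogram(cal_probs[:, c], bins=bin_edges)  # examples per bin
    marker_sizes = 20 + 300 * counts / max(counts.max(), 1)

    plt.figure(figsize=(6, 4))

    # Line for the bin mapping, with markers scaled by bin occupancy
    plt.plot(bin_centers, vals, linestyle="-", label=f"Calibrated (Histogram bins): class {c}")
    plt.scatter(bin_centers, vals, s=marker_sizes, zorder=3)

    # Reference diagonal
    plt.plot([0.0, 1.0], [0.0, 1.0], linestyle="--", label="Perfect calibration (y=x)")

    plt.xlabel("Uncalibrated probability (bin center)")
    plt.ylabel("Calibrated probability (bin value)")
    plt.xlim(0, 1)
    plt.ylim(0, 1)
    plt.title(f"Histogram Binning mapping: class {c}")
    plt.grid(True)
    plt.legend()
    plt.tight_layout()
    plt.show()
```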

The mappings are very irregular. This is because there are few calibration points relative to the number of classes. If the scores were uniformly distributed, each bin would hold on average only 5000 / 10 / 100 = 5 points.

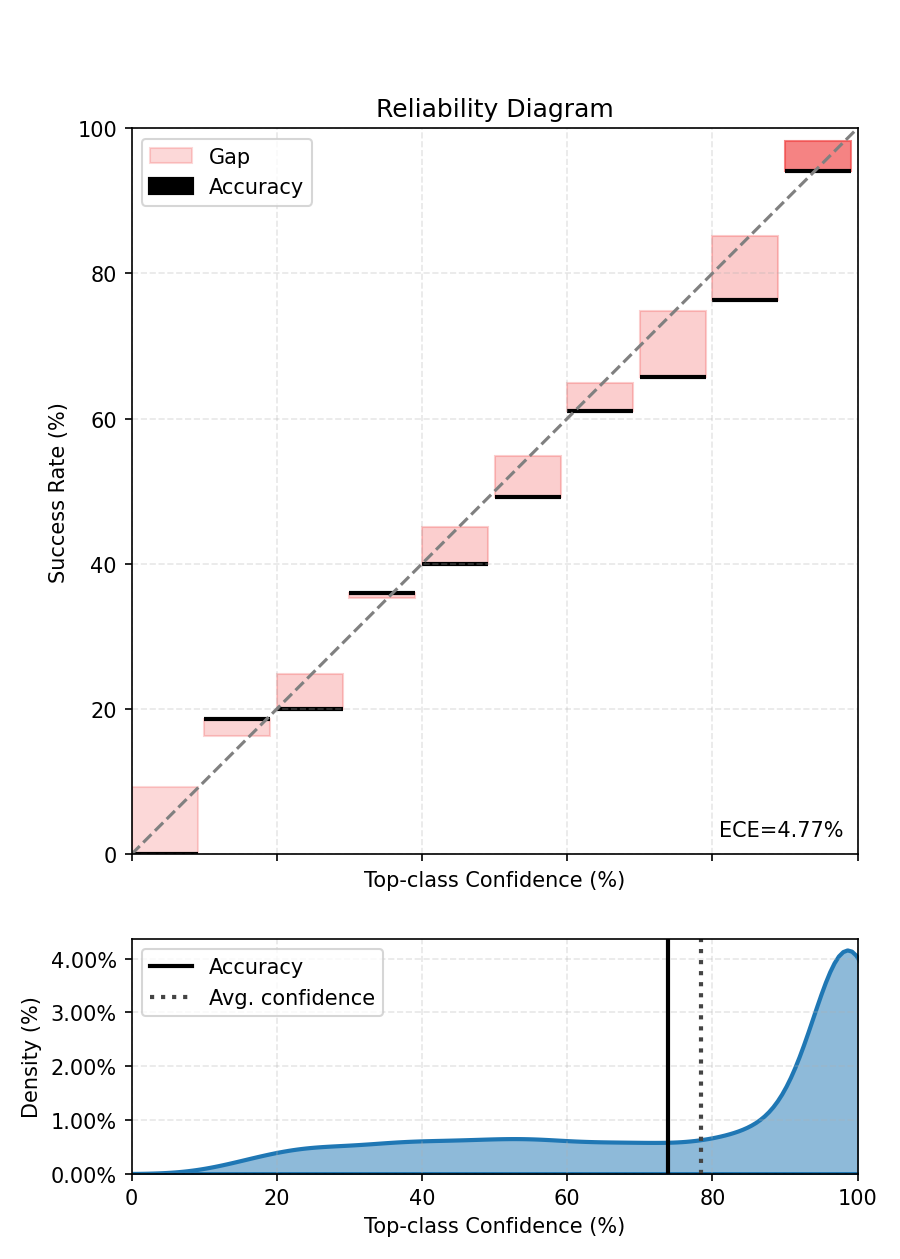

8. Isotonic Regression: fit and evaluate

Fit an IsotonicRegressionScaler on the same calibration set to compare a monotonic non-parametric method with histogram binning. Isotonic regression fits a monotone mapping and tends to produce smoother calibration functions.

```python
iso_scaler = IsotonicRegressionScaler(model=model)
iso_scaler.fit(dataloader=calibration_dataloader)

# Evaluate the isotonic-calibrated model on the held-out test set
ece.reset()
with torch.no_grad():
    for sample, target in test_dataloader:
        # Apply softmax to the scaler's output, as for the other scalers
        calibrated_out = iso_scaler(sample)
        probs = calibrated_out.softmax(-1)
        ece.update(probs, target)

print(f"ECE after Isotonic calibration - {ece.compute():.3%}.")

fig, ax = ece.plot()
fig.tight_layout()
fig.show()
```


ECE after Isotonic calibration - 4.746%.
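Isotonic regression is usually fitted with the pool-adjacent-violators (PAV) algorithm. The pure-Python sketch below fits a non-decreasing step function to (score, label) pairs for a single class. It illustrates the technique and is not necessarily how IsotonicRegressionScaler is implemented. Applying it one-vs-rest to each class and then renormalizing is one plausible multiclass extension. That extension is an assumption, not something this tutorial confirms.

```python
def pava(scores, labels):
    """Fit a non-decreasing step function to binary labels ordered by score.

    Returns the sorted scores and the fitted (calibrated) value for each of them.
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    # Each block stores [sum of labels, count]; its fitted value is the block mean.
    blocks = []
    for i in order:
        blocks.append([float(labels[i]), 1])
        # Merge backwards while the previous block's mean exceeds the last one's
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    fitted = []
    for total, count in blocks:
        fitted.extend([total / count] * count)
    return [scores[i] for i in order], fitted


# The middle pair violates monotonicity, so it is pooled into its mean of 0.5
print(pava([0.4, 0.1, 0.3, 0.2], [1, 0, 0, 1]))
# ([0.1, 0.2, 0.3, 0.4], [0.0, 0.5, 0.5, 1.0])
```

Pooling violators into their mean is what gives isotonic regression its monotone, piecewise-constant mapping. The breakpoints adapt to the data rather than to a fixed bin grid.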

9. Practical guidance

Takeaways:

- BBQ averages several equal-frequency binning models, each weighted by its Bayesian score (how well it explains the calibration data). This reduces overfitting compared with a single histogram. It still needs enough calibration data, so in multiclass settings with scarce data, prefer simpler schemes such as temperature scaling.
- Histogram binning is flexible and non-parametric: it replaces predicted probabilities with bin-wise empirical frequencies. It can correct complex miscalibration patterns but may produce discontinuities. It also needs a lot of data, especially with many classes as in this example.
- Isotonic regression enforces monotonicity (the calibrated probability never decreases as model confidence increases). It typically yields smoother mappings than plain histogram binning and is a good fit when monotonicity is desirable.
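The model-averaging idea behind BBQ can be sketched for a single one-vs-rest score. This is a simplified illustration, not the scoring used by Naeini et al. (2015) or by BBQScaler. Each candidate model is an equal-frequency binning with a different number of bins. Each model is scored by the marginal likelihood of the labels under a uniform Beta(1, 1) prior per bin (an assumption; the paper uses a BDeu-style prior). Predictions are then averaged with weights proportional to those scores. The synthetic data is illustrative.

```python
import math

import numpy as np


def _bin_ids(edges: np.ndarray, scores: np.ndarray) -> np.ndarray:
    # Bin b covers [edges[b], edges[b + 1]); the outer edges are -inf and +inf.
    return np.clip(np.searchsorted(edges, scores, side="right") - 1, 0, len(edges) - 2)


def fit_binning(scores: np.ndarray, labels: np.ndarray, num_bins: int):
    """Equal-frequency binning scored by a Beta(1, 1) marginal likelihood."""
    edges = np.quantile(scores, np.linspace(0.0, 1.0, num_bins + 1))
    edges[0], edges[-1] = -np.inf, np.inf
    ids = _bin_ids(edges, scores)
    estimates = np.empty(num_bins)
    log_evidence = 0.0
    for b in range(num_bins):
        in_bin = labels[ids == b]
        n, k = in_bin.size, int(in_bin.sum())
        # log of the integral of p^k (1 - p)^(n - k) dp under a uniform prior
        log_evidence += math.lgamma(k + 1) + math.lgamma(n - k + 1) - math.lgamma(n + 2)
        estimates[b] = (k + 1) / (n + 2)  # posterior mean of the bin's positive rate
    return edges, estimates, log_evidence


def bbq_predict(scores, labels, test_scores, bin_counts=(2, 4, 8, 16)):
    """Average the binning models' predictions, weighted by normalized evidence."""
    models = [fit_binning(scores, labels, b) for b in bin_counts]
    log_evidence = np.array([m[2] for m in models])
    weights = np.exp(log_evidence - log_evidence.max())  # uniform prior over models
    weights /= weights.sum()
    prediction = np.zeros(len(test_scores))
    for w, (edges, estimates, _) in zip(weights, models):
        prediction += w * estimates[_bin_ids(edges, test_scores)]
    return prediction, weights


rng = np.random.default_rng(0)
scores = rng.uniform(size=2000)
labels = (rng.uniform(size=2000) < scores**2).astype(int)  # true positive rate is score^2
calibrated, weights = bbq_predict(scores, labels, np.array([0.1, 0.5, 0.9]))
print(np.round(calibrated, 3), np.round(weights, 3))
```

Averaging lets coarse binnings dominate when data is scarce and fine binnings dominate when the data supports them. This is the overfitting protection mentioned in the BBQ takeaway.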

Practical tips:

- Visualize the learned mapping: inspect the bin values for histogram binning, or the monotone piecewise mapping for isotonic regression, to detect overfitting.
- If calibration data is scarce, prefer low-parameter approaches (temperature scaling) or reduce num_bins.
- If dataset shift is expected, measure calibration across multiple held-out splits or OOD datasets.
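Temperature scaling (Guo et al., 2017) is the low-parameter option suggested in the tips above. It divides the logits by a single scalar T fitted on the calibration set. The sketch below is a NumPy illustration, not the package's API. It assumes calibration logits and integer labels are already available as arrays, and it uses a simple grid search over T in place of the gradient-based optimizer a library would typically use.

```python
import numpy as np


def log_softmax(logits: np.ndarray) -> np.ndarray:
    shifted = logits - logits.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))


def nll(logits: np.ndarray, labels: np.ndarray, temperature: float) -> float:
    """Mean negative log-likelihood of the labels under softmax(logits / T)."""
    log_probs = log_softmax(logits / temperature)
    return float(-log_probs[np.arange(len(labels)), labels].mean())


def fit_temperature(logits: np.ndarray, labels: np.ndarray, grid=None) -> float:
    """Pick the temperature on a grid that minimizes calibration-set NLL."""
    if grid is None:
        grid = np.linspace(0.05, 5.0, 100)
    losses = [nll(logits, labels, t) for t in grid]
    return float(grid[int(np.argmin(losses))])


# Synthetic check: labels are drawn from softmax(z), but the "model" outputs 2 * z.
# It is therefore overconfident, and the fitted temperature should be close to 2.
rng = np.random.default_rng(0)
z = 2.0 * rng.standard_normal((5000, 10))
cumulative = np.exp(log_softmax(z)).cumsum(axis=1)
u = rng.uniform(size=(5000, 1)) * cumulative[:, -1:]
labels = (cumulative > u).argmax(axis=1)  # inverse-CDF sampling per row
temperature = fit_temperature(2.0 * z, labels)
print(temperature)
```

A single parameter cannot overfit the way 100 × 10 bin values can. That makes temperature scaling a robust baseline when the calibration set is small relative to the number of classes.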

References

Zadrozny, B., & Elkan, C. (2001). Obtaining calibrated probability estimates from decision trees and naive Bayesian classifiers. ICML 2001. <https://cseweb.ucsd.edu/~elkan/calibrated.pdf>

Guo, C., Pleiss, G., Sun, Y., & Weinberger, K. Q. (2017). On calibration of modern neural networks. ICML 2017. <https://arxiv.org/pdf/1706.04599.pdf>

Naeini, M. P., Cooper, G. F., & Hauskrecht, M. (2015). Obtaining Well Calibrated Probabilities Using Bayesian Binning. AAAI 2015. <https://arxiv.org/pdf/1411.0160.pdf>

Total running time of the script: (2 minutes 7.661 seconds)